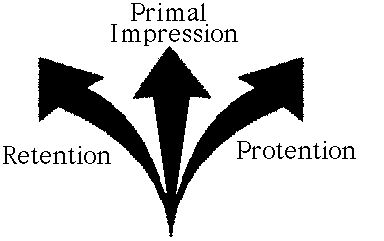

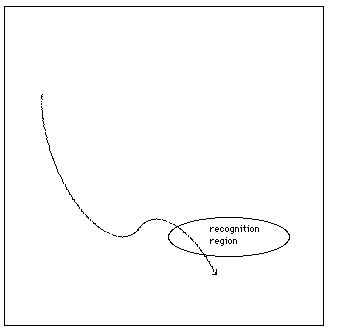

Figure 1: Husserl's triple structure of time consciousness.

[2] A moment's reflection can generate an interesting puzzle about time consciousness. Both the song and my awareness exist in time. They are like parallel tracks in the same time frame. However, as we standardly think about time, only the present and what is in it actually exist; thus, only a momentary stage of the song, and a momentary stage of my awareness, actually exist at any given time. The other stages did exist, or will exist, but do not exist now. It seems plausible, then, that my awareness at this moment of the music must be an awareness of the song now, i.e., of the current, momentary stage of the song. The other stages do not exist, so how could I be aware of them? And here's the puzzle: if at any given time I am just aware of that part of the song that is playing now, how can I ever be aware of the song as an integrated piece that takes place over time?

[3] It is not being claimed that this puzzle is a deep metaphysical difficulty, like Zeno's paradoxes. Rather, it is a provocative way to point out an interesting theoretical domain. It is an entry point into serious reflection on the nature of time consciousness. How is time consciousness possible?

[4] Presumably, an understanding of time consciousness should be part of an understanding of the nature of consciousness in general. Our philosophical and scientific tradition offers at least two major approaches to the study of mind and consciousness: phenomenology and cognitive science. These approaches are patently different in almost every important respect: they have their own literature, practitioners, professional meetings, vocabulary, methods, etc. They have fundamentally different orientations: phenomenology proceeds from the assumption that the study of mind must be rooted in direct attention to the nature of (one's own) experience, whereas cognitive science proceeds from the assumption that a genuine science of mind must be rooted in the observation of publicly available aspects of minds of others.

[5] To which of these approaches - phenomenology or cognitive science - should we turn for an understanding of time consciousness? They are sometimes regarded as direct competitors. Philosophical arguments have been adduced to demonstrate that genuine knowledge of consciousness can only be achieved by one method or the other. The position taken here, however, is that these conclusions are unwarranted; phenomenology and cognitive science should be regarded not only as compatible, but as mutually constraining and enriching approaches to the study of mind. This paper attempts a kind of "proof by example" of this position. It will demonstrate how both phenomenology and cognitive science can shed light on the phenomenon of time consciousness, and how their respective contributions can inform each other. The claim will be that only phenomenology and cognitive science in conjunction can adequately resolve the paradox of time consciousness described above.

[7] The upshot of the paradox of time consciousness is clear. When I am aware of something temporal as temporal, there is more to my awareness than simply a succession of momentary awarenesses of momentary stages. When I hear a melody, I don't simply hear this note now and another note a moment later. Something more is going on - but what could that be?

[8] An all-too-obvious move is to suggest that what we need, over and above the succession of momentary awarenesses, is an additional awareness of all the stages of the temporal object as comprising a single temporally extended entity. Husserl, attributing a view along these lines to Meinong, puts it this way: "there must be an act which embraces, beyond the now, the whole temporal object" (Husserl 1966: 226; quoted by Brough 1989: 266). Since this additional awareness cannot, by its very nature, take place until all the momentary stages have actually occurred, it must take place at the end of the succession of awarenesses.

[9] Husserl demonstrated that this view cannot be correct, however. For one thing, it is hard to see how this final all-embracing awareness can actually take place, for there is nothing left for it to embrace. By the time the final act takes place, the momentary stages of the object itself and the momentary awarenesses of those stages have been and gone; they are fully in the past, and in that sense no longer exist. One might attempt to remedy the situation by stipulating that one retains memories of the momentary stages of the object, and the final awareness combines those memories into an awareness of the entire temporal object. However, even as amended this way, the Meinongian suggestion falls afoul of another distinctively phenomenological objection. If you attend to your experience in being aware of a temporal object, you find yourself aware of that object as temporal even as time unfolds. When you listen to a melody, you don't have to wait around until the melody is finished in order to hear it as a melody.

[10] The key to solving the puzzle is to realize that, as Husserl put it, "consciousness must reach out beyond the now" (Husserl 1966: 227; quoted by Brough 1989: 267). Every momentary awareness of the temporal object must be an awareness of more than just the corresponding momentary stage of the temporal object. Consciousness must at that moment somehow relate the momentary stage of the temporal object to other stages, i.e., to what went before and what is to come. Husserl's theory of time consciousness is a sophisticated account of how awareness can build the past and the future into the present. It is thus a detailed theory of what is required for there to be any time consciousness (of the sort we have) at all.

[11] The basic insight is that every momentary stage or "act" of time consciousness actually has a triple structure (see figure 1). The centerpiece is a "primal impression" of the momentary stage of the temporal object. This primal impression corresponds roughly to the momentary awarenesses that figured in the Meinongian account. It simply takes in or "intends" what is now, in a way that is completely oblivious of what went before and what is to come. However, on Husserl's theory, primal impressions do not stand alone; they are only part of the triple structure that is essential to any individual act of time consciousness. Put differently, primal impressions are not awarenesses in their own right; they are essential ingredients or "moments" of full-blooded awarenesses of temporal objects.

[12] The other ingredients of time consciousness are retentions and protentions. These are also intendings of stages of the temporal object. What distinguishes them from primal impressions, however, is that retentions are intendings of past stages, whereas protentions are intendings of future stages. For a crude illustration, imagine hearing the tune "Yankee Doodle," and consider your awareness of the tune at the time of the second note, i.e., corresponding to the "-kee." Roughly speaking, on Husserl's account, your perceptual time consciousness of the tune as a whole at that very moment has a triple structure of intendings: a primal impression of the second note ("-kee"), a retention of the first note ("Yan-"), and a protention of the third note ("Doo-").

[13] Husserl's theory of the triple structure of time consciousness clearly accounts, in broad outline, for how my awareness now can build in the past and the future, and thus be an awareness now of the temporal object as temporal. However, it does so at the cost of invoking what seem to be rather mysterious beasts, retentions and protentions. Why mysterious? Well, go back to the original paradox of time consciousness. What is past has disappeared forever, and what is future has not happened yet. In an obvious sense, both past and future don't exist now. The mystery is: how can retentions and protentions "intend" what doesn't exist? Or rather, what are retentions and protentions, such that it makes some kind of sense to say that they do this?

[14] Husserl explores the notions of retention and protention, and their role in time consciousness, in great depth and detail, but it is not clear that he succeeds in clarifying these notions enough to remove the sense of mystery. The suggestion made in this paper is that this mystery can only really be resolved once Husserl's theory is fleshed out by reference to actual models, from cognitive science, of the causal mechanisms underlying time consciousness. Before looking at those models, however, more of Husserl's account needs to be on the table. The following is a simplified and synthesized list of properties that Husserl ascribed to retention:

[16] Protention is basically the symmetrical opposite of retention, directed towards the future rather than the past. The major difference, of course, is that whereas the past has happened, and in that sense is fixed, the future is still open; there is certainly no way in which one can know what it will be. How then, one might ask, can protention - intendings of the future, modeled on perception - possibly exist? This really is very mysterious. Husserl had relatively little to say about it, except to suggest, in a quite unsatisfactory manner, that protention is somehow both determinate and open at the same time.

[18] Cognitive scientists try to understand the causal mechanisms responsible for a given phenomenon by producing and analyzing models. There are at least two fundamentally different kinds of models, corresponding to what is arguably the deepest division between kinds of research in cognitive science. That is the division between the computational approach, on the one hand, and the dynamical approach on the other. Computationalism has dominated cognitive science since the discipline emerged in the fifties and sixties, and is still the mainstream or orthodox approach (see Pylyshyn 1984). It takes cognitive systems to be computers, in the quite strict and literal sense of machines which manipulate internal symbols in a way that is specified, at some level, by algorithms. Computers in this sense are exemplified by Turing Machines. Cognition, according to this approach, is the internal transformation of structures of symbolic representations. A classic example of mainstream computational modelling is the SOAR framework developed by Newell and others, within which detailed models of a wide variety of aspects of cognition have been developed (Newell 1991). Since cognition is taken to be the behavior of a computer, computer science provides the general conceptual framework within which cognition can be studied. Standing behind this approach to cognition is a long philosophical tradition, stretching from Plato through Descartes, Leibniz and Kant, and culminating in contemporary philosophers such as Fodor, which assumes that the essence of mind is to represent and reason about the world.

[19] What do computational models of auditory pattern recognition look like? At the most generic level, they work by applying recognition algorithms to symbolic encodings of the auditory information. This implies a two-stage process; one in which the auditory signal is encoded, and another in which the encoded information is analysed. As a consequence, there is a structural feature which all computational models share: the buffer. This is a kind of temporary warehouse in which the auditory information is stored in symbolic form as it comes in, in preparation for the recognition algorithms to do their work. This is achieved by sampling the auditory signal at a regular rate, i.e., using an independent clock to pick out points of time and measuring the signal at those times, then storing in the buffer symbolic representations of those measurements together with the time they were taken.
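The two-stage structure described above can be made concrete with a minimal sketch. Everything in it is an illustrative assumption rather than a description of any particular published model: the function names `sample_signal` and `recognize`, the sine-wave `tone`, the sampling rate, and the crude template comparison standing in for a recognition algorithm. The point is only the temporal shape: the signal is first sampled on a fixed clock and stored with time stamps, and recognition is attempted only on the buffered encoding.

```python
import math

def sample_signal(signal, duration, rate):
    """Stage 1: sample `signal` (a function of time) on a fixed clock.

    Returns the buffer, a list of (time, value) pairs.
    """
    n = int(round(duration * rate))
    return [(i / rate, signal(i / rate)) for i in range(n)]

def recognize(buffer, template, tolerance=0.1):
    """Stage 2: compare the buffered values with a stored template.

    A stand-in for a recognition algorithm; it can only succeed once
    the buffer holds the entire pattern.
    """
    if len(buffer) != len(template):
        return False
    return all(abs(value - target) <= tolerance
               for (_, value), target in zip(buffer, template))

def tone(t):
    """Hypothetical auditory signal: a 2 Hz sine wave."""
    return math.sin(2 * math.pi * 2.0 * t)

buffer = sample_signal(tone, duration=1.0, rate=16)
template = [value for _, value in buffer]   # pretend this was learned earlier

print(recognize(buffer, template))       # the complete pattern is in the buffer
print(recognize(buffer[:8], template))   # only half of the pattern has arrived
```

In this sketch nothing resembling recognition occurs while the buffer is still filling; the half-filled buffer is simply rejected.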

[20] The dynamical approach to cognitive science takes cognitive systems to be dynamical systems rather than computers.(2) In this context, a dynamical system is a set of quantities evolving interdependently over time. The solar system is the best-known example of a dynamical system; it consists of the positions and momenta of the sun and planets, all of which are changing all the time, in such a way that the current state of every quantity affects the way every other one changes. In cognitive science, most (though by no means all) dynamical models of cognition are neural networks, in which the interdependently evolving quantities are the activity levels of the neural units. According to the dynamical approach, cognition is not manipulation of symbols but rather state-space evolution within a dynamical system, and the emergence of order and structure within such evolution. The relevant conceptual framework is that of dynamics (dynamical modeling and dynamical systems theory), which may well be the most widely used and successful explanatory framework in the whole of natural science. Standing behind the dynamical approach is an alternative philosophical tradition, from Heidegger and Ryle earlier this century to contemporary figures such as Dreyfus and Varela, which takes the essence of mind to be ongoing active engagement with the world.

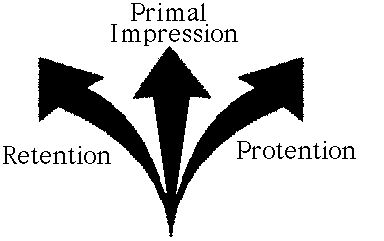

[21] What do dynamical models of auditory pattern recognition look like? At the most generic level, they work by having the auditory pattern influence the direction of evolution within the dynamical system. It will be useful to describe one such dynamical model in just a little detail. This is the Lexin model for auditory pattern recognition developed by Sven Anderson and Robert Port (Port, McAuley, and Anderson 1996). It is a neural network in which each of the system variables (neural units) changes its activity level as a function of the current activity of the other variables and the connections between them in a way that is determined by differential equations. For the general architecture of the network and the form of the governing differential equations see figure 2.
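For readers without access to the figure, a generic form may help fix ideas. The following is a common way of writing a continuous-time neural network in which each unit's rate of change depends on the current activity of the other units and on the connections between them. It is offered as an assumption for illustration only and should not be read as the Lexin equations themselves:

$$
\tau_i \frac{dx_i}{dt} = -x_i + \sigma\Big(\sum_j w_{ij}\, x_j + I_i(t)\Big)
$$

Here $x_i$ is the activity level of unit $i$, $w_{ij}$ is the strength of the connection from unit $j$ to unit $i$, $I_i(t)$ is the external (auditory) input to unit $i$, $\tau_i$ is a time constant, and $\sigma$ is a squashing function. All of these symbols are illustrative choices, not notation taken from the model.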

[22] There are many subtleties about the particular organization and behavior of this network, but for current purposes we only need to understand the principles of its operation at the most generic level. The total state of the network at a given time is the set of values, at that time, of each of the neural units. This total state corresponds to a point in a geometric space of all possible total states. Every neural unit is continuously changing its activity under influence from other units, and so the total state of the system is itself continuously changing, or moving on a particular trajectory through the space. This trajectory can be diagrammed, in a very schematic way, by a curve on a plane. The shape of this curve can be affected by external factors. In other words, inputs to the system influence the direction of change in the system. If these inputs correspond to particular segments of the auditory pattern, then the curve will reflect not only the intrinsic tendencies of the system, but also the distinctive structure of the auditory pattern. In the Lexin model, particular sounds turn the system in the direction of unique locations in the state space, and if the system is exposed to nothing but a given sound, it will eventually end up just sitting at that point. When exposed to a sequence of distinct sounds, however, the system will head first to one location, then head off to another, waving and bending in a way that is shaped by the sound pattern, much as a flag is shaped by gusts of wind. In more technical terms, particular input frequencies constitute parameter settings which fix the location of unique point attractors; when the frequency changes, the system bifurcates, and the trajectory is fixed by this sequence of bifurcations ("attractor chaining").
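The generic idea that each sound pulls the system toward its own location in state space can be sketched in a few lines. This is a toy, not the Lexin model: the two-dimensional state, the `ATTRACTORS` table, the leaky-integrator equation dx/dt = (a - x) / tau, and the Euler step size are all assumptions chosen for simplicity. In this toy a change of sound merely moves the point attractor, which is a much simpler thing than the behavior of the actual network.

```python
# Hypothetical mapping from sounds to point-attractor locations in a 2-D state space.
ATTRACTORS = {"A": (1.0, 0.0), "B": (0.0, 1.0)}

def run(pattern, state=(0.0, 0.0), tau=1.0, dt=0.001):
    """Euler-integrate dx/dt = (a - x) / tau, where a is the attractor
    selected by the sound currently being presented.

    `pattern` is a list of (sound, duration) pairs.
    Returns the list of states visited, i.e. the trajectory.
    """
    x, y = state
    trajectory = [(x, y)]
    for sound, duration in pattern:
        ax, ay = ATTRACTORS[sound]
        for _ in range(int(round(duration / dt))):
            x += dt * (ax - x) / tau
            y += dt * (ay - y) / tau
            trajectory.append((x, y))
    return trajectory

# Exposed to nothing but sound "A", the system ends up sitting at A's attractor.
settled = run([("A", 10.0)])[-1]

# Exposed to "A" and then "B", it heads toward one location and then bends toward the other.
chained = run([("A", 1.0), ("B", 1.0)])[-1]

print(settled)
print(chained)
```

The final state after the two-sound sequence lies at neither attractor; where it lies depends on which sounds were presented, in what order, and for how long.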

Figure 2: The Lexin dynamical model of auditory pattern recognition developed by Robert Port and Sven Anderson. On the right is a schematic depiction of the neural network architecture. On the left is the general form of the differential equations which govern the evolution of individual variables and hence change in the system as a whole.

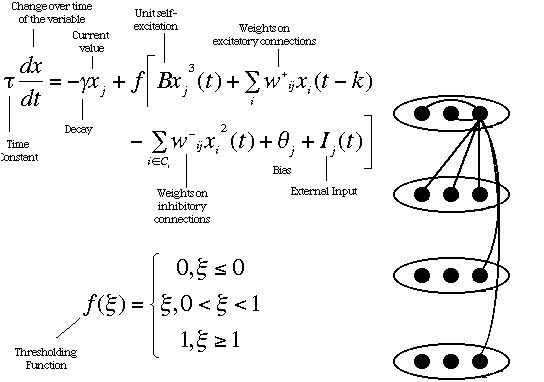

[23] Now, how can an arrangement of this kind be understood as recognizing patterns? Recognizing a class of patterns requires discriminating those patterns from others not in the class, and this must somehow be manifested in the behavior of the system. One way this can be done is by having the system arrive in a certain state when a familiar pattern is presented, and not otherwise. That is, there is a "recognition region" in the state space which the system passes through if and only if the pattern is one that it recognizes. Put another way, there is a region of the state space that the system can only reach if a familiar auditory pattern (sounds and timing) has influenced its behavior. Familiar patterns are thus like keys which combine with the system lock to open the door of recognition.
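A toy version of the lock-and-key picture can be written down directly. The sketch below makes several assumptions that are not drawn from the Lexin model: a two-dimensional leaky-integrator system, a hypothetical `ATTRACTORS` table, and a circular recognition region whose `CENTER` and `RADIUS` are picked by hand so that the trajectory produced by sound "A" for one time unit followed by sound "B" passes through it.

```python
import math

# Hypothetical sound -> attractor mapping for a 2-D toy system.
ATTRACTORS = {"A": (1.0, 0.0), "B": (0.0, 1.0)}

def trajectory(pattern, tau=1.0, dt=0.001):
    """States visited while Euler-integrating dx/dt = (a - x) / tau
    for a pattern given as (sound, duration) pairs, starting at rest."""
    x, y = 0.0, 0.0
    states = [(x, y)]
    for sound, duration in pattern:
        ax, ay = ATTRACTORS[sound]
        for _ in range(int(round(duration / dt))):
            x += dt * (ax - x) / tau
            y += dt * (ay - y) / tau
            states.append((x, y))
    return states

# Hand-picked recognition region: centred where the exact solution sits
# after "A" for 1.0 time units followed by "B" for 0.3 time units.
CENTER = ((1 - math.exp(-1.0)) * math.exp(-0.3), 1 - math.exp(-0.3))
RADIUS = 0.03

def recognizes(pattern):
    """True if the trajectory ever enters the recognition region."""
    return any(math.dist(state, CENTER) <= RADIUS for state in trajectory(pattern))

print(recognizes([("A", 1.0), ("B", 1.0)]))   # familiar sounds, order and timing
print(recognizes([("B", 1.0), ("A", 1.0)]))   # same sounds, wrong order
print(recognizes([("A", 0.3), ("B", 1.0)]))   # right order, wrong timing
```

Note that the system does not wait for the pattern to finish: with the familiar pattern, the trajectory enters the region partway through the second sound. A system this simple only approximates the "if and only if" requirement, since other inputs could in principle be contrived to steer it into the same region.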

[24] The remarkable fact is that dynamical systems can in fact be configured so as to exhibit exactly this kind of response. This is what the Lexin model does with a small repertoire of relatively simple patterns. If this model is along the right lines, this is what our own auditory systems do with the vast repertoire of complex and subtle patterns that we can discriminate from the sea of noise around us.

Figure 3: A schematic depiction of the state space of the Lexin model, and a behavioral trajectory. Each point along the curved line represents a state of the system at a time. The shape of the curve is determined by a combination of the system's intrinsic behavioral tendencies and external influences. In this case, the system passes through the recognition region and thereby recognizes the external influences as constituting a familiar pattern. A successful auditory pattern recognition system of this type is one that will pass through the recognition region if, and only if, a familiar pattern is presented.

[26] An obvious and superficially plausible objection to the computational approach in general has always been that cognition does not seem to be a matter of manipulation of symbols inside the head. When one introspects on one's own thought processes, one generally doesn't observe the symbols and transformations that are alleged to constitute those processes. The standard way in which mainstream computational cognitive science has dealt with this objection is to suggest that the posited computational processes are subconscious, and so the phenomenology does not directly bear on the issue. This strategy is not simply a matter of self-serving selection of the kinds of evidence to be taken into account. There is no reason to assume that the phenomenology of a mental process should somehow give direct insight into the nature of the causal processes that subserve that phenomenology. By analogy, the picture on television gives no insight into the causal mechanisms that give rise to that picture (except, perhaps, in the very special case in which the program on television happens to concern how televisions work). In a certain sense, we "see through" the screen to the content that is conveyed by the television. In a similar way, we "see through" the mechanisms responsible for our cognitive processes.

[27] Unfortunately, computationalists have, by and large, gone on illegitimately to assume a general license to ignore phenomenological data of any kind whenever it is convenient to do so. Their models are generally evaluated only by whether they match the measured performance data, and not by whether they make any sense in terms of our own observations of our experience. In the long run, however, this attitude cannot be sustained. Phenomenological evidence - both the everyday kind and the theoretically sophisticated deliverances of Husserl and others - is one source of relevant constraint among others. Like any other data, phenomenological evidence must be treated with care, but no theory of mind and cognition would be complete unless it could properly integrate causal mechanisms with phenomenology. Although phenomenological observation cannot, in general, be presumed to give direct insight into the nature of the mechanisms responsible for experiential phenomena, there must ultimately be an account of why those phenomena are the way they are given (at least partly) in terms of the nature of those mechanisms. For this reason, the nature of our experience should be regarded as having the potential to constrain our theories and models in cognitive science.

[28] Now, the phenomenological observation that is relevant here is simply a relatively obvious point on which Husserl relied when criticizing the Meinongian theory of time consciousness. Recall that, according to that theory, time consciousness is made possible by the presence of an additional mental act which surveys all the momentary stages and synthesizes them into a single apprehension of the object as a temporal whole. This act can only take place once all the momentary stages have occurred. However, attending to the nature of the experience involved, it seems clear that we do not have to wait until the temporal object is over before we can perceive it as a temporal object. I don't have to wait until the end of "Smells Like Teen Spirit" before experiencing it as the song that it is; indeed, I don't have to wait until the end of even a single bar. After perhaps an initial delay, every sound, on a moment by moment basis, is perceived as part of that tune. In short, time consciousness unfolds in the very same time frame as the temporal object itself.

[29] How does this observation bear on the difference between dynamical and computational models? Well, notice that computational models have the same temporal structure as the Meinongian account of time consciousness. In computational models, the auditory pattern is first encoded symbolically and stored in a buffer. Only when the entire pattern has been stored in the buffer do the recognition algorithms go to work; and it is only when those algorithms have done their work that the system could be said to have any kind of awareness of the pattern as such. It follows, therefore, that computational models are open to the very same objection as the Meinongian account. The fact is, in order to recognize a tune as such, we don't (as computational models suggest) have to wait until the end of the tune. I hear the tune as a tune even as the tune is playing.

[30] On the other hand, dynamical models exhibit exactly the kind of simultaneous unfolding that phenomenological observation suggests. The state of the system is always changing, and from the onset of the very first sound, the system is evolving in a direction which reflects both the auditory pattern itself and the system's familiarity with that pattern. In other words, the system begins responding to the pattern as the pattern that it is from the moment it begins.

[31] Clearly, then, dynamical models fit much better with the nature of our experience than do computational models. Other things being equal, this gives us good reason to prefer dynamical models over computational models; and if we accept this, then we have accepted that phenomenology can substantially constrain cognitive science.

[32] How might computationalists respond? One possibility is to deny or reinterpret the phenomenological evidence. Perhaps it merely seems that our experience of a tune unfolds while the tune is playing. Perhaps in fact all we have, while the tune plays, is a momentary awareness of the sound playing at each instant, and only when the tune is over do we comprehend the tune as a temporal whole. Perhaps, in Dennett's terms, the mechanisms of time consciousness do an Orwellian rewrite of the temporal history of our experience.

[33] Perhaps. Note, however, that there is no particular reason to believe this other than a desire to save computational models in an ad hoc way. We should presumably prefer a kind of explanation which does not require us to suppose that we are systematically deceived by our experience over one that does require this. Note also that the standard strategy employed by computationalists to discount phenomenological evidence, however plausible it might be in other cases, is not persuasive in this particular case. The actual time-course of my experience - how it happens in time - is not so obviously something that is conscious or subconscious in the normal sense. In any case, presumably the phenomenological conclusion can be tested by psychological ("third person") experiments which shed light on the actual temporal frame of experience and can indicate whether our sense of awareness of a temporal object as it unfolds is an illusion or not.

[34] Another kind of response would be to deny that a Meinongian structure is essential to computational models. Certainly, it is possible to imagine some kind of parallel-processing computational architecture which begins pattern recognition from the very moment the auditory event commences. This would be a major structural departure from current computational models, and parallel processing is always much more easily envisaged than actually achieved. Perhaps it is not, after all, essential to computational models that they be broadly Meinongian. However, what is essential is that there be two theoretically distinct stages, one in which the auditory pattern is symbolically represented, and one in which algorithms operate on that symbolic representation. It is no accident that this theoretical distinction is universally reflected in the actual temporal structure of computational models; to avoid a Meinongian structure is to work against the computational grain. In the long run, if what you need in your system is simultaneous coevolution, you may as well go with one that has it as a standard feature rather than with one that can only get it as an expensive aftermarket accessory.

[36] The main claim of this section is the bold one that cognitive science can tell us what retention and protention actually are, and in that sense deepen our understanding of them and of time consciousness in general. The case is parallel to that of genes. The theory of heredity effectively dictated that there must be some mechanism of transferral of information from one generation to another, and specified the general properties that it must have. It was then up to the molecular biologists to discover what genes actually were, i.e., the chemical compounds and processes which in fact have the general properties. Likewise, Husserl's phenomenological theory specifies that time consciousness requires retention and protention, and the general properties they must have, and cognitive science uncovers the actual mechanisms which underlie time consciousness and instantiate retention and protention. In discovering what retention and protention actually are, we deepen our understanding of the phenomenological theory and may even be able to alter and improve that theory.

[37] When we understand how dynamical models of auditory pattern recognition work, we are already (without realizing it) understanding what retention and protention are. When we look at schematic diagrams of the behavior of these models, we are (without realizing it) already looking at schematic depictions of retention and protention. The key insight is the realization that the state of the system at any given time models awareness of the auditory pattern at that moment, and that state builds the past and the future into the present, just as Husserl saw was required.

[38] How is the past built in? By virtue of the fact that the current position of the system is the culmination of a trajectory which is determined by the particular auditory pattern (type) as it was presented up to that point. In other words, retention is a geometric property of dynamical systems: the particular location the system occupies in the space of possible states when in the process of recognizing the temporal object. It is that location, in its difference with other locations, which "stores" in the system the exact way in which the auditory pattern unfolded in the past. It is how the system "remembers" where it came from.

[39] How is the future built in? Well, note that a dynamical system, by its very nature, continues on a behavioral trajectory from any point. This is true even when there is no longer any external influence. The particular path it follows is determined by its current state in conjunction with its intrinsic behavioral tendencies. Thus, there is a real sense in which the system automatically builds in a future for every state that it happens to occupy. Now, if the current state is one that results from exposure to a particular auditory pattern, the future behavior of the system will itself be sensitive to that exposure. In other words, the system will automatically proceed on a path which (in practice) uniquely reflects the particular auditory pattern that it has heard up to that point. Thus, protention is also a geometric property of dynamical systems - indeed, the very same property as retention. Protention is the location of the current state of the system in the space of possible states, though this time what is relevant about the current state is the way in which, given the system's intrinsic behavioral tendencies, it shapes the future of the system. It is the current location of the state of the system which stores the system's "sense" of where it is going.
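The claim that one and the same current location carries both the past and the future can be illustrated with a toy system. As before, the sketch rests on assumptions that are not taken from the Lexin model: a two-dimensional leaky integrator, a hypothetical `ATTRACTORS` table in which `None` stands for silence (the system's intrinsic tendency is then simply to relax toward rest), and hand-picked durations.

```python
# Hypothetical sound -> attractor mapping; None stands for silence (no input).
ATTRACTORS = {"A": (1.0, 0.0), "B": (0.0, 1.0), None: (0.0, 0.0)}

def evolve(state, sound, duration, tau=1.0, dt=0.001):
    """Euler-integrate dx/dt = (a - x) / tau from `state` for `duration`."""
    x, y = state
    ax, ay = ATTRACTORS[sound]
    for _ in range(int(round(duration / dt))):
        x += dt * (ax - x) / tau
        y += dt * (ay - y) / tau
    return (x, y)

def hear(pattern, state=(0.0, 0.0)):
    """Current state after exposure to a list of (sound, duration) pairs."""
    for sound, duration in pattern:
        state = evolve(state, sound, duration)
    return state

# "Retention": the same two sounds heard in different orders leave the
# system at different locations, so the location reflects how the past unfolded.
now_ab = hear([("A", 1.0), ("B", 0.3)])
now_ba = hear([("B", 1.0), ("A", 0.3)])

# "Protention": with the input removed, each state continues along the
# path fixed by that state and the system's intrinsic tendencies.
later_ab = evolve(now_ab, None, 0.5)
later_ba = evolve(now_ba, None, 0.5)

print(now_ab, now_ba)
print(later_ab, later_ba)
```

Nothing in the state is a stored copy of the earlier sounds, and nothing in it is a prediction in symbolic form; the two histories are distinguished, and the two futures fixed, by location alone.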

[40] The best way to tell whether these identifications are really appropriate is to compare retention and protention on the dynamical interpretation with the list of properties that Husserl ascribed to retention and protention. And in fact, they turn out to fit like hand and glove. Almost every description Husserl gave of retention and protention finds a natural interpretation in terms of the current location of a dynamical system capable of auditory pattern recognition such as the Lexin model. Considering retention first again:

[42] Do these dynamical interpretations reveal what retention and protention actually are, or do they really show there is (after all) no such thing? This is a judgement call; it depends on the extent to which the dynamical models instantiate the properties which Husserl ascribed to retention and protention. My own opinion is that there is such a strong match that the right answer is that cognitive science is giving an alternative account of the very same theoretical postulates. However, this judgement requires rejecting one significant aspect of Husserl's interpretation of time consciousness, namely his idea that time consciousness requires, in some sense, the direct perception of the past and future. Rather, we can now understand better what retention and protention actually are, and can see how they can intend the past and future without perceiving them. Since this was the most problematic aspect of Husserl's account, this demonstrates how cognitive science can not only flesh out phenomenology, but actually improve it.